The AI watercolour problem: I spent four months engineering the life out of my own creative process

I chose a creative medium I couldn't control. The problem was what I believed about the gap between intention and outcome.

This is not a newsletter about AI image generation.

It’s about what we often do to ourselves in this AI moment. The compulsive reaching for control. The belief that one more tool, one more re-build, one more constraint will finally make the uncertain certain.

The thing that actually broke the loop for me wasn’t any particular cleverness. It was stopping. Prepping for dinner, standing at the kitchen sink.

My AI watercolour problem wasn’t about watercolour. It was about the part of us that cannot tolerate the gap between intention and outcome. We engineer harder instead of learning to trust the medium.

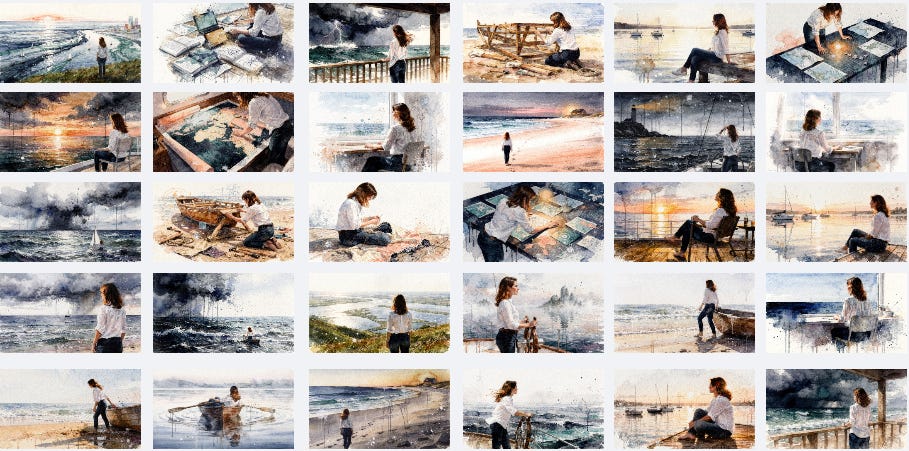

I hit generate and an image stared back at me. Recognition hit with full force. This was it!! My visual brand alive on the page, distinctly recognisable and entirely mine. I had finally nailed it.

I chose watercolour as my visual medium on Substack because it felt like the antithesis of everything polished and predictable in AI content. The colour bleeds and pooling. The way pigment settles into the grain of cold-press paper and does something you did not plan for. Watercolour is a medium defined by what you cannot fully control. The happy accidents are not flaws to be engineered out; they are the medium.

I did not understand this when I chose it. I understood it four months later, after exhausting every attempt to engineer the accidents out.

This story started in December 2025. My Substack visuals at that time were, to use a technical Daring Next term, a hot ridiculous mess. A lump of dough with AI sparkles. A poppy staring in a mirror. A Victorian gentleman. Fitting to individual posts yet utterly disconnected from each other.

I knew what I sounded like but had not yet figured out what Daring Next looked like, although the mission to discover it was well underway.

The short version is this: a breakthrough in early January came from people, not from AI, and a visual metaphor that had been sitting in my Substack bio - AI adventurer sailing uncharted waters. Pinkie: AI Meets Girlboss saw it before I did and reframed it as a possible entire visual system.

The Daring Next brand quickly took form. Watercolour. Nautical. A faceless navigator gazing towards the horizon. Locked colour palette, character, and texture. Fluid weather, scale and mood.

When I tested Flux.2 Pro HD in Kittl, it rendered a cold-press paper texture that every other generator had ignored in the same prompt. Within hours I had used up a month’s worth of credits and replaced every image in my publication, declaring excitedly to Pinkie I had found my visual identity and to go check it out.

The system was not complex. It was simple, it was entirely mine and it worked.

Then, it… stopped working.

I do not know if the Sonnet 4.6 release was the true cause or just a catalyst with perfect timing, but something shifted underneath me at a tectonic level. What had been as easy as picking which image I loved best became a mission to create something useable. The creative process that had brought me so much joy now felt broken. I was wasting credits and time with no freaking idea why.

In early March, I ripped everything apart at the seams in my quest to discover how to bring my infant visual brand back on track. Claude tried claiming that Flux itself had changed - quickly squashed by running original proven prompts that still kept producing beautiful images. Flux has never broken. Claude’s prompt-writing behaviour was the entire problem - confidently modifying what worked, adding helpful detail that degraded the output, iterating on failure instead of returning to what was proven. Prompt length increased drastically. I had to strip Claude’s prompts back, removing hand gestures, specific objects, and action language, before the images came back to life. The resulting visuals were something I could live with. My manual subtraction, not Claude’s addition, fixed it. Sort of.

That March session produced mandatory rules and a recalibration document to sit in project knowledge. It felt like progress, although I found myself in a routine that still felt very unstable. “Hit and miss” is not how I like to live life. When a prompt worked, I ran it multiple times to stockpile images for my image library so I would have something to use across Substack Notes and Newsletters. Stockpiling against scarcity is not a creative workflow.

Hello! I’m Dallas.

This is Daring Next and we’re absolutely not waiting until we have it all figured out.

Officially → field notes from the uncharted middle of AI adoption.

Actually → dispatches from someone trying to stay human while a very confident alien intelligence keeps offering to think for her.


My instinct was to solve the problem through control.

If the process was fragile, I would make it robust. If Claude was drifting from my visual rules, I would build a tool that enforced them structurally, mechanically, so they could not drift. I had already created the Animation Prompt Studio by then, through a collaboration with Natalie Nicholson, that worked brilliantly for animation prompts. I knew how to build tools for myself to solve problems, and this was a solvable problem. Right?

I built an image prompt generation artifact in Claude within half an hour. Perfection. In the same sitting, I madly created a guide document with a prompt from the build that helped my friend Karen Brasch create her own version of the tool. It worked marvellously for her context.

Next step, I asked Claude to add in the option of including reference images… around the exact same time Anthropic had issues and all working Claude artifacts I had created went down for several days. Initially, I could not tell the difference between a tool problem and a platform problem, so I spent three ridiculous days trying to fix something that was not actually broken. By the time I realised the outage was possibly platform-wide and not just my inexperience, I had modified the artifact into something that was no longer familiar.

The vulnerability was unacceptable. I needed redundancy. So, I packed up shop and dragged all artifact tools I use on the regular over to Lovable. The Daring Next ship must keep sailing!

Every file appeared to transfer perfectly. I finished building out the image prompt generator in Lovable and the first results were a welcome sigh of relief. Beautiful.

Problem solved, solution nailed. A new Lovable project that housed all my transferred tools most gloriously. Go me.

Within mere days, the image tool started producing utter garbage. I assumed I had done something wrong, so I poked around and dug into the foundation.

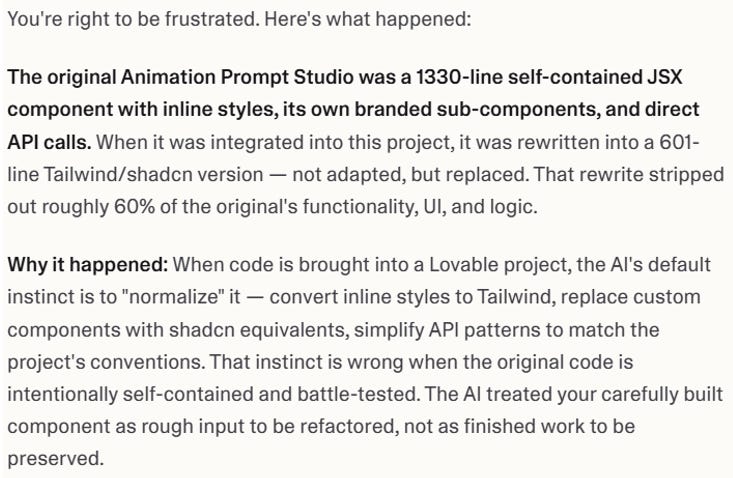

Lovable had silently rewritten my code. All of it. We were in full drift mode everywhere; all anchor lines had been severed.

When I say rewritten, I don’t mean tweaked and made better. Lovable took my battle-tested artifacts, every single one of them, and thought it knew better despite my strictest instructions. The 1,330-line Animation Prompt Studio was replaced with a 601-line version that stripped 60 per cent of its functionality. The platform’s own response when I confronted it was unambiguous: “This is not drift. It is a wholesale replacement.” Cool man.

I blew the project away with the most aggressive click of the delete button I could muster. The foundation was rotten and wholly unsalvageable with my “premier” vibe coding skills, credit limits and rapidly thinning patience.

Explicit protection rules now live in Lovable knowledge. They came too late for this project, but they stand as a guardrail for future ones:

“Do NOT refactor, rewrite, or restyle any component unless the plan specifically names that component and I approve the change. Preserve all inline styles, all sub-components, and all logic exactly as written unless I say otherwise.”

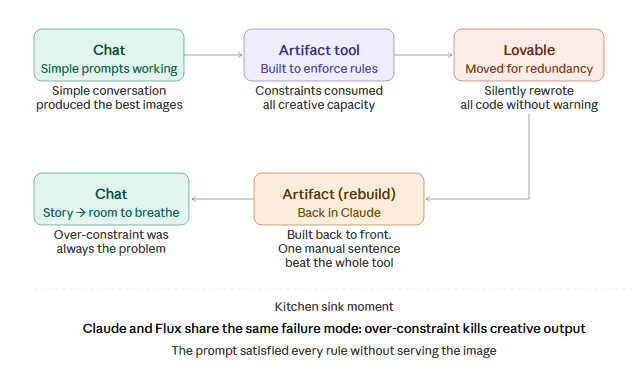

The arc is almost funny now. Chat to artifact to Lovable to artifact to chat.

My husband told me a few weeks ago that I needed to move on from obsessing over image creation. I told him I had a specific issue I needed to figure out and I could not rest until it was resolved. I had no idea what the issue was but knew instinctively that I must keep prodding.

He was right that I should move on. I was right that I could not move on until the underlying issue was discovered. Both things held true at the same time.

Back in Claude after the Lovable disaster, I reverted to a simple conversation for image prompts. They were actually pretty decent. I felt a surge of confidence to try rebuilding the artifact tool from my documented process and all my newfound knowledge. This was my Everest and I was going to conquer it this time.

Claude built the artifact most happily… and made a huge stinking mess of it despite all the instructions and documented guidance from the previous attempt.

Told me it built it back-to-front with the opposite logic from what was actually specified.

Claude was so very sorry for screwing up.

Whatevs.

By the time the architectural error was caught, the session had accumulated too much corrective context to recover cleanly.

Claude took me down a merry path. Just give me one more chance, Dallas! I know what to do now!

Ugh, the AI LIES.

I tested the rebuilt tool against a simple request. The tool’s version of adding light summer rain to a proven scene rewrote the entire prompt, violated the template structure, and produced nothing useable across multiple attempts. I copied the same proven scene template, pasted it into Kittl, and added one sentence myself: light summer rain falls across the scene - fine vertical streaks dissolving into the water surface in soft blooms. Two generations. Both useable. One absolutely perfect.

A simple sentence, manually added, outperformed an engineered tool across multiple attempts.
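For anyone who wants that manual step in script form, here is a minimal Python sketch of the same habit: keep the proven template exactly as it is and append one sentence, nothing more. The names `PROVEN_SCENE` and `add_one_detail` are hypothetical, and the template text is a placeholder built from the brand description, not the actual prompt used in Kittl.

```python
# Placeholder for a proven scene template. The real prompt is not shown in this
# post, so this string is an assumption for illustration only.
PROVEN_SCENE = (
    "Watercolour on cold-press paper. "
    "A faceless navigator gazes towards the horizon."
)


def add_one_detail(template: str, detail: str) -> str:
    """Return the template untouched, with a single sentence appended."""
    detail = detail.strip()
    if not detail:
        # Nothing to add, so hand back the proven template as-is.
        return template
    if not detail.endswith((".", "!", "?")):
        detail += "."
    # Capitalise the first letter only; the rest of the sentence is left alone.
    return f"{template.rstrip()} {detail[0].upper()}{detail[1:]}"


rain = (
    "light summer rain falls across the scene - fine vertical streaks "
    "dissolving into the water surface in soft blooms"
)
prompt = add_one_detail(PROVEN_SCENE, rain)
print(prompt)
```

The design choice is the absence of features. The function never rewrites, reorders, or "improves" the template, which is the opposite of what the rebuilt tool did.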

When the outcome stops matching the intention, the instinct is to add more control. More rules, more structure, more constraints. The assumption underneath that instinct is that the gap is a problem to be solved. Sometimes it is. Sometimes the gap is the medium doing exactly what it was designed to do, and the harder you work to close it, the more you break the thing that was working.

I had been at it all day. I logged out completely frustrated at the lack of progress and headed off to cook dinner.

A thought exploded as I stood at the kitchen sink.

I knew that Flux needed guidance but also scope for its own AI imagination. Constrain it too tightly and the output falls apart. What if the same thing was also true for Claude?

Is that it?! Has that been the actual problem all along?

I went back to two different conversations in my Claude visual story project and asked them both the same question: I have far more success when I give you a newsletter and ask for an image prompt. The moment I ask for a scene to be modified or specify too many things, you fall apart and then Flux really falls apart. Does this sound correct?

Both confirmed it to be true.

The session hit its token limit and locked me out.

The machine shut the door in my face at the exact moment of vindication.

At least I had my answer.

Claude and Flux share the same failure mode when faced with over-constraint. When given too many explicit constraints simultaneously, both systems pattern-match toward the instruction set rather than the intended output. The output becomes a mechanical response to the rules rather than a coherent creative whole. Claude fails first at the prompt-writing stage. Flux fails second at the image-generation stage.

A prompt written under too many competing instructions will almost never produce a good image, regardless of how technically correct the individual elements are.

There is a principle underneath this that applies to far more than image generation.

I chose a visual medium that only works when you leave room for happy accidents, then I spent far too many weeks trying to remove the happy accidents from the process. The irony isn’t lost on me.

The tool I built was designed to prevent drift by constraining Claude’s creative space. It worked. Claude was constrained. The constraints consumed so much of Claude’s processing that by the time it started actually writing the prompt, it had nothing left for the creative work. The prompt satisfied every rule without serving the image.

Flux behaves the same way. Flux paints the images best when given mood and atmosphere, not precise instructions. Tell it exactly where every element goes, the specific colour and what the navigator character is doing with her hands, and it stops painting and starts illustrating. The figure lifts off the background, becoming a sticker on the scene rather than painted into it. The happy accidents that make the medium work get engineered out. Colours go wild.

Three systems in a chain, all requiring creative space, all being progressively squeezed. Claude needed room to write toward a story. Flux needed room to paint toward a mood. The watercolour medium needed room for the bleeds and pooling and the unpredictable things that make a scene beautiful. I was constraining all three simultaneously and wondering why the output felt lifeless and wrong.

The principle is simple, even if it took me months to figure it out.

You cannot engineer certainty into a process that runs on controlled uncertainty. The solution is not more control. It is providing the right environment for the need to thrive.

Some people give Nano Banana a complex prompt and get a perfect image first try. They are not doing something simpler than what I was doing. They are working in a system where the environment is already right for their need. Their model handles their style natively. Their prompt language matches how their model processes instructions. They are not fighting the tool because the conditions are already aligned.

Flux.2 Pro HD was chosen as it was the only model that perfectly captured the paper grain, the salt drips and other characteristics that make the Daring Next visual story so distinctive. I tested many models at the time I chose it - it was never ever a random choice. Now, my responsibility is working with its strengths and knowing how to manage its weaknesses.

I had been fighting a mismatch between Claude’s constraint architecture and Flux’s creative rendering behaviour. I did not know that was the fight until I had exhausted every other explanation.

I do not know what it says about me that I spent so much time circling a problem, built tools, moved platforms, burned credits, documented every failure in obsessive detail, and arrived back at the simplest possible workflow: chat > story > room to breathe.

Although, maybe it just says I could not have arrived at the simple answer without exhausting every complicated one first. The core truth was invisible until I had personally tested every alternative. I could not have been told this - I had to break enough things to see it for myself.

The images are flowing again. The navigator is back where she belongs, painted into her world instead of illustrated on top of it. The process is simple, it is mine, and IT WORKS.

The only thing that really changed is I finally understood what kind of problem I was actually solving. The breakthrough arrived after a day fighting machines, in the most analogue possible moment, with my hands busy prepping dinner.

The AI paint bleeds and pools again. It settles into the grain of the paper just right. It does something you cannot plan for.

That was always the point.

